Artificial Intelligence Audit

In our previous short articles, we discussed why we need trustworthy artificial intelligence and which principles and values have been defined for it around the world. In this short article, we discuss the audits needed to ensure the trustworthiness of artificial intelligence systems, the types of audit, and the audit frameworks recommended by different groups.

To ensure the governance of artificial intelligence systems and to mitigate the risks that may arise from them, these systems need to be audited. This audit, called an artificial intelligence audit or algorithm audit, takes two forms depending on who performs it: internal audit (first-party audit) and external audit (second- and third-party audits). An internal audit is an audit that the company conducts on itself [1]. An external audit can be performed as a second-party audit or a third-party audit. While “second party audits are conducted by parties having an interest in the organization, such as customers, or by other individuals on their behalf, third party audits are conducted by independent auditing organizations, such as those providing certification/registration of conformity or governmental agencies.” [1]. External auditing is recommended in the context of the EU's General Data Protection Regulation (GDPR) and its interpretations (e.g. the EU's guidelines on automated individual decision-making and profiling) [2]. The EU High-Level Expert Group's Ethics Guidelines for Trustworthy AI point out that auditability is a requirement for trustworthy AI and state that independent internal and external audits are necessary [2]. Another type of audit, distinguished by its content rather than by who performs it, is the compliance audit. A compliance audit is an independent examination of an organization's adherence to regulatory guidelines [3]. It can be carried out both internally and externally.

Different frameworks have been proposed for auditing artificial intelligence systems. One of them is the independent audit of artificial intelligence systems. This framework was proposed by a multidisciplinary and international team in an article titled “Governing AI safety through independent audits” to ensure the reliability of AI systems [4]. Independent auditing is a consensus-based, transparent and stakeholder-involved process for transforming existing laws, guidelines and best practices into implementable, measurable, and binary (compliant/non-compliant) audit rules [4]. Auditing is expected to be consistent with three governance principles:

“1) Prospective reviews before highly automated systems are implemented,

2) Audit trail to analyze errors and assist in assessing accountability, and

3) System adherence to judicial requirements” [4].

Insurers, courts and government agencies are three potential practitioners of auditing [4].
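The second principle above, the audit trail, can be illustrated with a small sketch. This is not a method prescribed in [4]; it is only one possible way, assumed here for illustration, to keep a tamper-evident record of automated decisions by chaining each log entry to the hash of the previous one. The class name `AuditTrail`, the event fields and the model name are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry (assumption)


@dataclass
class AuditTrail:
    """Append-only decision log; each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        # Serialize with sorted keys so the same event always hashes the same way.
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def record(self, event: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "event": event,
            "prev_hash": prev_hash,
            "hash": self._digest(prev_hash, event),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            expected = self._digest(prev_hash, entry["event"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical decisions made by a hypothetical model.
trail = AuditTrail()
trail.record({"model": "credit-scorer-v1", "input_id": 17, "decision": "reject"})
trail.record({"model": "credit-scorer-v1", "input_id": 18, "decision": "approve"})
```

An auditor who later calls `trail.verify()` can detect whether any recorded decision was altered after the fact, which is the kind of evidence an audit trail is meant to provide when errors and accountability are assessed.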

Although no independent audit of AI systems has been conducted so far, ForHumanity has created an independent audit model. ForHumanity has translated existing laws, guidelines and best practices into binary audit rules in a transparent and crowdsourced manner [5]. The UK's General Data Protection Regulation (GDPR) and the Age Appropriate Design Code are examples of laws that ForHumanity has translated into audit rules [5]. In brief, the audit rules for the independent audit model were created as follows: a group of people who wanted to be involved in the process transparently translated the laws into binary audit rules, which were then sent for approval to the Information Commissioner's Office (ICO), the UK's data protection authority. The process has been iterative: the ICO has provided feedback, sending the rules back and asking for them to be revised. Once the ICO accepts the audit rules, independent audits can be conducted based on them.
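To make the idea of a binary (compliant/non-compliant) audit rule more concrete, the following is a minimal sketch of how such rules might be represented and evaluated. The two rules, their identifiers and the evidence fields are invented for illustration; they are not ForHumanity's actual audit rules, and the `AuditRule` and `run_audit` names are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Evidence = Dict[str, object]


@dataclass(frozen=True)
class AuditRule:
    """One binary rule: the check returns True (compliant) or False."""
    rule_id: str
    description: str
    check: Callable[[Evidence], bool]


def run_audit(rules: List[AuditRule], evidence: Evidence) -> Dict[str, str]:
    """Evaluate every rule against the evidence and label the outcome."""
    return {
        rule.rule_id: "compliant" if rule.check(evidence) else "non-compliant"
        for rule in rules
    }


# Hypothetical rules, loosely inspired by data protection requirements.
rules = [
    AuditRule(
        "R1",
        "A data protection impact assessment is on file",
        lambda e: e.get("dpia_completed") is True,
    ),
    AuditRule(
        "R2",
        "Profiling is switched off by default for child users",
        lambda e: e.get("child_profiling_default") == "off",
    ),
]

# Hypothetical evidence collected from the audited organization.
evidence = {"dpia_completed": True, "child_profiling_default": "on"}

results = run_audit(rules, evidence)
is_compliant = all(outcome == "compliant" for outcome in results.values())
```

Because every rule yields only one of two outcomes, the overall result is unambiguous: the organization passes only if every rule is marked compliant.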

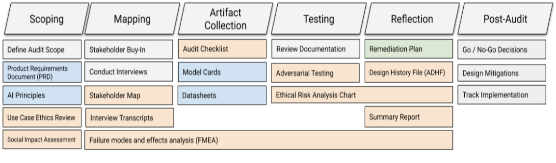

Different frameworks have also been proposed for internal auditing. One of these was proposed by researchers associated with Google and the Partnership on AI in the article “Closing the AI Accountability Gap: Defining an End-to-End Framework for Internal Algorithmic Auditing” [6]. This framework allows companies and engineering teams to audit AI systems before they are deployed. As shown in the figure below, the framework consists of six stages:

“1) Scoping,

2) Mapping,

3) Artifact Collection,

4) Testing,

5) Reflection and

6) Post-Audit” [6]:

Gray sections indicate a process, while colored sections represent documents that must be produced as part of the audit. Orange documents are produced by the auditors, blue documents are produced by the engineering and product teams, and green documents are jointly developed [6].

Another framework proposed for internal auditing is the COSO Enterprise Risk Management (ERM) framework of the Committee of Sponsoring Organizations of the Treadway Commission (COSO). The framework consists of five components:

“1) Governance and Culture,

2) Strategy and Objective-Setting,

3) Performance,

4) Review and Revision, and

5) Information, Communication, and Reporting” [7].

As can be seen in the figure below, these components are based on 20 principles [7]:

Another framework is the General Data Protection Regulation (GDPR) audit. A GDPR audit can be considered a kind of compliance audit, and it can be carried out both internally and externally. Many consulting companies offer external GDPR audit services, and many institutions have published GDPR audit checklists for internal GDPR auditing. The general topics included in one of these lists are: “Personal data, Scope of application, Lawful grounds for processing, Transparency requirements, Other data protection principles and accountability, Data subject rights, Data security, Data breaches, International data transfers, Other controller obligations, Other processor obligations” [8]. Another list focuses on: “Governance, Risk management, GDPR project, Data protection officer, Roles and responsibilities, Scope of Compliance, Process analysis, Privacy information management system, Information security management system, Rights of data subjects” [9].
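As a rough illustration of how an internal team might track such a checklist, the sketch below records a status for each of the topics listed in [8] and reports which topics are still open. The status values and the helper names (`new_checklist`, `open_topics`, `completion_ratio`) are assumptions made for this example and do not come from either checklist.

```python
from enum import Enum
from typing import Dict, List


class Status(Enum):
    NOT_STARTED = "not started"
    IN_PROGRESS = "in progress"
    DONE = "done"


# Topic names taken from the checklist quoted in [8].
TOPICS = [
    "Personal data",
    "Scope of application",
    "Lawful grounds for processing",
    "Transparency requirements",
    "Other data protection principles and accountability",
    "Data subject rights",
    "Data security",
    "Data breaches",
    "International data transfers",
    "Other controller obligations",
    "Other processor obligations",
]


def new_checklist() -> Dict[str, Status]:
    """Start every topic as not started."""
    return {topic: Status.NOT_STARTED for topic in TOPICS}


def open_topics(checklist: Dict[str, Status]) -> List[str]:
    """Topics that still need work before the audit can be closed."""
    return [topic for topic, status in checklist.items() if status is not Status.DONE]


def completion_ratio(checklist: Dict[str, Status]) -> float:
    """Share of topics marked done, between 0.0 and 1.0."""
    done = sum(1 for status in checklist.values() if status is Status.DONE)
    return done / len(checklist)


# Hypothetical progress of an internal audit.
checklist = new_checklist()
checklist["Data security"] = Status.DONE
checklist["Data breaches"] = Status.IN_PROGRESS
```

A real internal audit would attach evidence and responsible owners to each topic; the sketch only shows how the checklist's topics can be turned into something that is tracked systematically.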

Writer: Gamze Büşra Kaya

References

[1] ISO 19011:2018. 2018. Guidelines for Auditing Management Systems. Standard. International Organization for Standardization.

[2] Supreme Audit Institutions of Finland, Germany, the Netherlands, Norway and the UK, “Auditing machine learning algorithms,” Introduction, 24-Nov-2020. [Online]. Available: https://www.auditingalgorithms.net/Introduction.html. [Accessed: 22-Mar-2022].

[3] Supreme Audit Institutions of Finland, Germany, the Netherlands, Norway and the UK, “Auditing machine learning algorithms,” Technical terminology, 24-Nov-2020. [Online]. Available: https://www.auditingalgorithms.net/technical-terminology.html. [Accessed: 22-Mar-2022].

[4] G. Falco, B. Shneiderman, J. Badger, R. Carrier, A. Dahbura, D. Danks, M. Eling, A. Goodloe, J. Gupta, C. Hart, M. Jirotka, H. Johnson, C. LaPointe, A. J. Llorens, A. K. Mackworth, C. Maple, S. E. Pálsson, F. Pasquale, A. Winfield, and Z. K. Yeong, “Governing AI safety through independent audits,” Nature Machine Intelligence, vol. 3, no. 7, pp. 566–571, 2021.

[5] R. Carrier, M. Potkewitz, S. Clarke, M. Krebsz, and S. Narayanan, “Comments of ForHumanity In the Matter of Artificial Intelligence Risk Management Framework,” AI RMF RFI Comments - For Humanity. [Online]. Available: https://www.nist.gov/system/files/documents/2021/09/17/ai-rmf-rfi-0107.pdf. [Accessed: 22-Mar-2022].

[6] I. D. Raji, A. Smart, R. N. White, M. Mitchell, T. Gebru, B. Hutchinson, J. Smith-Loud, D. Theron, and P. Barnes, “Closing the AI accountability gap,” Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 2020.

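[7] Committee of Sponsoring Organizations of the Treadway Commission (COSO), “Realize the Full Potential of Artificial Intelligence.” [Online]. Available: https://www.coso.org/Documents/Realize-the-Full-Potential-of-Artificial-Intelligence.pdf. [Accessed: 22-Mar-2022].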

[8] “GDPR audit checklist - Taylor Wessing's Global Data Hub,” Home - Taylor Wessing's Global Data Hub. [Online]. Available: https://globaldatahub.taylorwessing.com/article/gdpr-audit-checklist. [Accessed: 22-Mar-2022].

[9] “GDPR compliance audit,” IT Governance. [Online]. Available: https://www.itgovernance.co.uk/gdpr-compliance-audit. [Accessed: 22-Mar-2022].